Acknowledgements

We would like to acknowledge the generous help and support of the following in supplying us with damaged documents for testing of imaging techniques:

- Richard Linenthal, Bernard Quaritch Rare books

- Bill Marger, Philadelphia

- Pierre Joppen of Paulus Swaen old maps, Florida

- Doyle W Flowers Jr, www.hisbooks.net, Atlanta, Georgia

Digitizing primary sources for the DIAMM archive: why build a digital and not an analogue archive?

The main advantage of a digital image over an analogue one is the longevity of the digital medium (although this assumes a well-managed archiving strategy). Even carefully stored film or glossy pictures will become brittle in 20 years, and are also subject to physical damage that cannot be repaired. Analogue images cannot be accurately copied or reproduced through multiple generations without loss of data and degradation or alteration of the original material.

The fact that a digital image remains unchanged and can be copied precisely is both an advantage and a disadvantage, as the copyright issues that arise with exact copying are significantly more pertinent than with analogue images, where copying is never completely exact. Because digital imaging technology is still in its relative infancy, we have yet to see whether our preservation and file migration strategies will indeed leave an image unchanged over time, but the ability to preserve in this way is inherent to the digital medium.

A second advantage of the digital image is its ability to reproduce the original with far more exactitude than the analogue image can. Digital capture at the resolutions employed by DIAMM (144 Megapixels) involves the acquisition of a far greater quantity of information than analogue capture. Most of this information is not visible to the naked eye except under extreme magnification, but this sort of magnification is possible in the digital medium. The basic digital image tolerates far more enlargement without graining than its analogue counterpart would.

Because analogue developing processes rely on human judgement as much as on any technical process to produce the final image, any close study of colours and ink bleed (for example) would be pointless unless conducted on the original source. Given the fragility of most of the sources from the medieval period, this would be highly undesirable.

Why digitize primary and not secondary sources (surrogates)?

The question of whether it is necessary to scan from the original source rather than a surrogate (a slide or photograph) frequently arises: it is certainly possible to obtain very high-resolution scans of surrogates without any recourse to the original. However, a good scan of a photograph or slide is still only a scan of a secondary image, and any deficiencies in that source will be carried forward into the digital copy. Enlargement of digital scans made from high-quality photographs emphasizes any deficiency in focus, since conventional photography does not allow the photographer to check the focus at very high magnification. Even if the image is in focus, the grain of the print medium degrades the image quality when the print is in turn scanned and enlarged.

Scanning from surrogates carries forward any error introduced by the original capture process: between the primary source and the surrogate, a human operator has had to make decisions about focus and colour reproduction, and each decision is an opportunity to corrupt the original data. Because our ability to perceive colour is often flawed, and always limited to the part of the light spectrum that we can see, every layer of reproduction adds a level at which errors are introduced. The eye is also incapable of fine focus beyond a certain level, and it is this further level of definition that is so essential in studying manuscripts in the digital medium. A scan from a surrogate is only as good as the surrogate, not the original.

The first image below was scanned from a good colour photograph. The second image was digitized directly from the original source. Both images have been enlarged to pixel-for-pixel resolution on screen (i.e. full size).

Colour

When photo labs make a reprint either from a print or from its negative they are rarely, if ever, able to reproduce the colour of the original print precisely, because they rely both on human judgement and the use of chemical processes that are too inaccurately calibrated to be exactly reproduced.

The mechanism by which we perceive colour is still only partially understood, but one extraordinary ability of the brain, chromatic adaptation, which enables us to function normally in changing light conditions, becomes a handicap when dealing with precise colour balancing. As an example, if you put on a pair of dark glasses, the lenses will completely change the real colours reaching your eye. However, a split-second adjustment in the brain allows us to continue to recognise and understand colours correctly even through a very heavily tinted filter.

All capture devices, from scanners to hand-held cameras, require calibration to determine how the CCD responds to colours. Archival digital scanning work is done with consistent daylight-balanced lighting: this increases the scan time but ensures a more accurate result. Since the light is continuous (unlike flash), exact calibration of the capture equipment can ensure a correct record of the lighting conditions.

Direct digital acquisition from the original source ensures both stability of the colour balance and the quality of the information, which remains true to the original because it is not subject to transformation from the analogue to the digital language. As long as the equipment used to view or print the image is correctly calibrated, and makes correct use of the embedded profile of the capture equipment, the user will see correct colour either on-screen or when the digital image is printed. No post-processing (unsharp masking to correct poor focus, levels adjustment to correct exposure, colour adjustment, de-skewing or other tweaking) should be necessary on a correctly scanned or photographed image. If any post-processing is required, then the image has not been properly taken, and the work must be re-done.

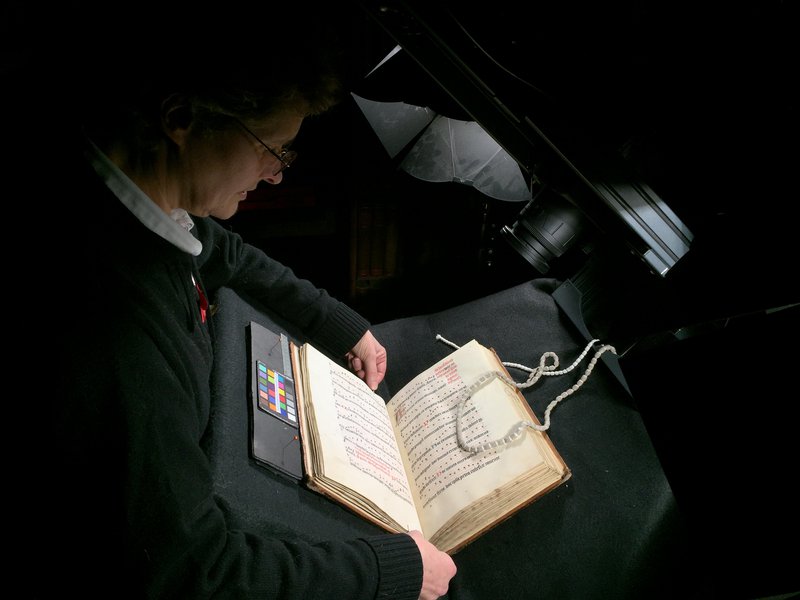

Image capture and archival process in DIAMM

DIAMM digital images are captured at very high resolution (usually 600 dpi or above at real size), the resulting uncompressed TIFF files being in the region of 200-340 MB in size. These are the archive images, which are stored by Oxford University Computing Services, which uses a Hierarchical File Server (HFS) system. This is a resource of the Oxford Humanities Computing department. A second deposit of the images is held by the HFS of the Arts and Humanities Data Service (UK). Each image includes an industry-standard colour patch and a rule showing the original dimensions. DIAMM stores extensive metadata about each individual image, including the type and quality of the light source and the equipment used for image capture, in order to anticipate any future needs that may arise with improvements in technology and software. Capture metadata and Dublin Core information about the source are held in the TIFF header, and also in the project database. Further detailed metadata describing the manuscript and its contents is recorded in the database, portions of which may be consulted through this website.
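The relationship between capture resolution and file size quoted above follows from simple arithmetic: an uncompressed image stores one sample per channel for every pixel. The following is a minimal sketch only. The function names, the three-channel RGB layout, the 8-bit depth and the 300 mm x 400 mm leaf are illustrative assumptions, not DIAMM's documented settings, and header and metadata overhead are ignored.

```python
def pixels_for_page(width_mm, height_mm, dpi):
    """Pixel dimensions needed to record a page at the given dpi at real size."""
    mm_per_inch = 25.4
    return (round(width_mm / mm_per_inch * dpi),
            round(height_mm / mm_per_inch * dpi))


def uncompressed_size_mb(width_px, height_px, channels=3, bits_per_channel=8):
    """Approximate size of uncompressed pixel data in megabytes (10**6 bytes)."""
    bytes_per_pixel = channels * bits_per_channel // 8
    return width_px * height_px * bytes_per_pixel / 1_000_000


# Hypothetical example: a 300 mm x 400 mm leaf at 600 dpi, 8-bit RGB (assumed).
w, h = pixels_for_page(300, 400, 600)
print(f"{w} x {h} px, about {uncompressed_size_mb(w, h):.0f} MB uncompressed")
```

Under these assumptions the example leaf comes out at roughly 200 MB, which is at the lower end of the range given above; a larger leaf or a greater bit depth increases the figure proportionally.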

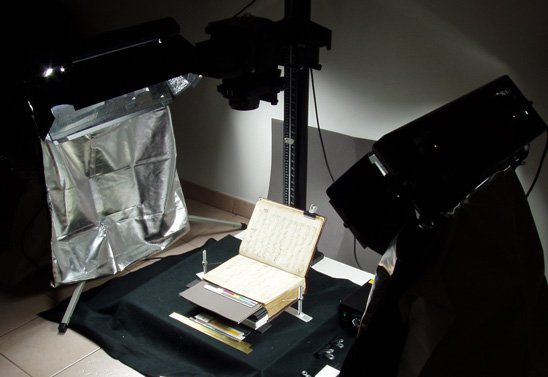

DIAMM is unique in providing a mobile imaging service with capture protocols in place that enable us to create digital images of exactly matching quality and consistency, anywhere in the world and in virtually any working conditions. Funding from the AHRB/AHRC and most recently the John Fell Research Fund in Oxford has enabled the project to acquire digital images of many of the most important medieval music manuscripts in Europe. It has also allowed us to image fragments which, because of their limited size, are rarely visited: it is difficult to justify the cost of travelling to see a single leaf as opposed to a multi-page manuscript. The libraries that own these sources would not have been able to afford to digitize them at this quality themselves at the time the images were taken, so the creation of these images has been a major work of conservation, as well as providing scholars with a set of images which they can acquire for themselves through each library. We now have two mobile studio setups using Manfred Mayer 'Traveller+' conservation cradles, which give considerable flexibility for larger MSS. Our range of Blue Circle lenses allows us to shoot very small objects as well as very large ones.

A copy-stand system is also available for extremely large objects, allowing us to position the camera anything up to approx. 3 metres from the object. Because of the high resolution of the cameras, even at this distance we can obtain archive resolution. To get higher resolution (e.g. of maps or charters) these items may be better shot in sections, with an image of the full document for reference.

In cases of extreme (or even minor) damage to sources, copies of images can be used for virtual restoration or reconstruction processes with the permission of the owner. Since restoration is only done on the images, this is completely safe for the manuscript as it is not touched again. If a restoration process on an image fails or is unsatisfactory, the image is simply discarded, and the process is reworked with a new copy of the original image.

Camera specifications

Most high-street hand-held digital cameras have a capture resolution of around 15-20 megapixels. High-end digital cameras used by professional photographers have a capture resolution now approaching 20-30 megapixels, sufficient to produce a high-quality printed image at around A3 size. These cameras can capture images at speeds comparable to those of conventional cameras, and are referred to as single-shot cameras, as they use a rectangular CCD. Older technology allowed high-resolution imaging to be done with a scanning back or a smaller sensor that took four images that were then stitched together by the operating software.
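The link between megapixel count and print size mentioned above can be checked with a short calculation. This is an illustrative sketch only: the 300 dpi print resolution, the 3:2 sensor aspect ratio and the function name are assumptions introduced here, not figures from the project.

```python
import math


def print_size_mm(megapixels, print_dpi=300, aspect=(3, 2)):
    """Largest print (long side, short side) in mm at the given print resolution.

    The aspect ratio and print dpi are assumed values for illustration.
    """
    aw, ah = aspect
    long_px = math.sqrt(megapixels * 1_000_000 * aw / ah)
    short_px = long_px * ah / aw
    mm_per_inch = 25.4
    return long_px / print_dpi * mm_per_inch, short_px / print_dpi * mm_per_inch


A3_MM = (420, 297)  # ISO 216 A3 sheet, long side x short side
for mpx in (20, 30):
    long_mm, short_mm = print_size_mm(mpx)
    print(f"{mpx} Mpx: {long_mm:.0f} x {short_mm:.0f} mm "
          f"(A3 is {A3_MM[0]} x {A3_MM[1]} mm)")
```

With these assumed values, both ends of the 20-30 megapixel range cover an A3 sheet at 300 dpi.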

DIAMM has two camera setups which can be run independently or in tandem.

- PhaseOne IQ1 80 Mpx single-shot camera on XF body. Resulting dpi depends on the size of the original document and position of the camera, but we always aim to fill the frame with the book so that no dpi is lost unnecessarily. Although now relatively 'older' technology, the IQ1 80 is the top of the PhaseOne IQ1 range, and produces impeccable images at archive-quality resolution (minimum 400 dpi at real size, ideally 600 dpi or more) for most sources.

- PhaseOne IQ4 150 Mpx single-shot camera on XF body. Resulting dpi depends on the size of the original document and position of the camera. As with the IQ1 80, we aim to utilise the whole frame where possible, and our wide range of lenses allows us to photograph at a huge variety of distances from the object, depending on its size.

- Lenses: we have a range of BlueCircle lenses that allow us to get extremely close for detail work or very small documents, or work from a distance for very large sources. The lenses that we use most often are 120mm/f4.0 and 55mm/f2.8.

- Lighting: our normal static lights for RGB imaging (normal colour) are 3000K DeSisti C.D. 15B 150W CDM cold Broadlights, which emit a very pure daylight-balanced light with almost undetectable levels of UV. They are specifically designed for imaging of delicate documents where light exposure is critical. We also have a set of Elinchrom Pro 500/500 flash units, of a kind now used by many libraries and archives as they have been proven safe in terms of lux and light impact on the original source. You may specify which system you prefer.

- Special equipment: the project utilises low-frequency UV lighting heads for UV imaging (particularly useful for faded, scraped or rubbed documents) and infra-red emitters for IR imaging (essential in dealing with ink acid burnthrough or other heat-type damage). We also have an interference bandpass filter system that allows us to undertake multi-spectral work (suitable for all types of damage, but particularly used in the recovery of palimpsests). Multi-spectral work requires some complex post-processing, so images are not usually delivered directly as shot.

All images are captured at the highest possible resolution in the hope that there will be no necessity to revisit the manuscript for photography in the foreseeable future.
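Because the resulting dpi depends on how much of the frame the document fills, the achievable resolution for a given camera can be estimated in advance. The sketch below is an approximation under stated assumptions: the 4:3 aspect ratio, the function names and the 400 mm x 300 mm example document are illustrative, and exact pixel dimensions should be taken from the manufacturer's specifications rather than derived from the megapixel count.

```python
import math


def sensor_dimensions(megapixels, aspect=(4, 3)):
    """Approximate (long, short) pixel dimensions for an assumed aspect ratio."""
    aw, ah = aspect
    long_side = math.sqrt(megapixels * 1_000_000 * aw / ah)
    return round(long_side), round(long_side * ah / aw)


def dpi_at_real_size(megapixels, doc_long_mm, doc_short_mm, aspect=(4, 3)):
    """Resolution at real size when the document just fits inside the frame."""
    long_px, short_px = sensor_dimensions(megapixels, aspect)
    mm_per_inch = 25.4
    # The tighter of the two axes limits the resolution.
    return min(long_px / (doc_long_mm / mm_per_inch),
               short_px / (doc_short_mm / mm_per_inch))


# Hypothetical 400 mm x 300 mm document filling the frame of each camera.
for mpx in (80, 150):
    print(f"{mpx} Mpx: about {dpi_at_real_size(mpx, 400, 300):.0f} dpi")
```

Under these assumptions, a document of that size exceeds the 600 dpi archive target on both sensors; a larger document lowers the figure in proportion, which is why very large items may be better shot in sections.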